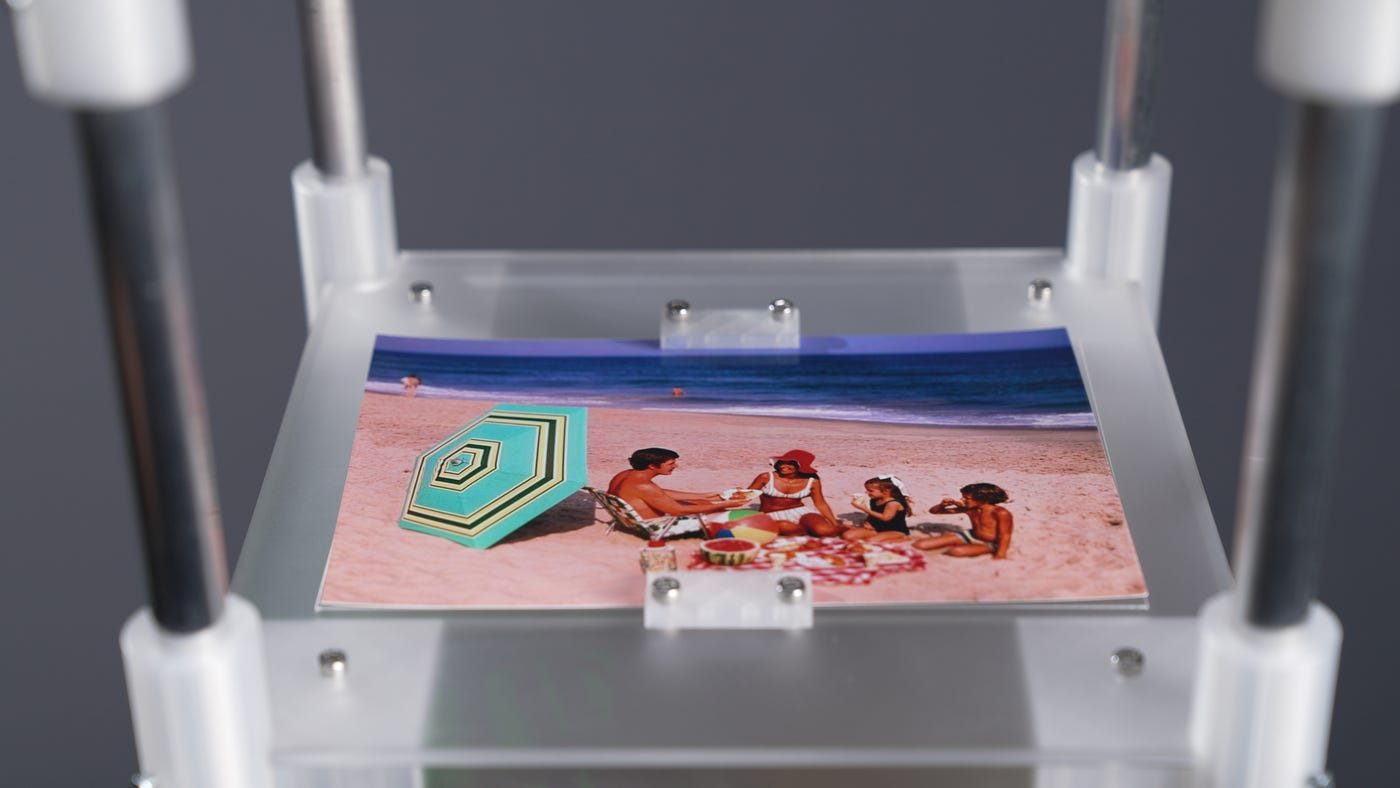

MIT Media Lab has developed a prototype that uses generative AI to interpret and distil the contents of a photograph into a fragrance. The Anemoia Device, described as a “scent-memory machine”, is structured in three vertical parts. You place an analogue photograph at the top. An AI-powered system in the middle analyses the image and lets you shape a prompt using three physical dials. At the base, a set of pumps draws from 50 fragrance reservoirs to produce a custom scent.

Researcher Cyrus Clarke frames the entire process as distillation. You take a dense, layered memory artefact and compress it into something concentrated and sensory. His focus sits on “anemoia” – nostalgia for a time you’ve never experienced. While the device can translate any photographic memory, he’s particularly interested in those unlived or inherited moments: childhood photos, family archives, recipes passed down. Familiar but not yours.

The system uses a vision-language model to interpret the image, but the user directs the outcome. First, you choose a point of view, isolating a subject within the frame. That might be a person, or it might be an object such as a tree or bicycle. Next, you define a lifecycle stage: for a person, child or elderly; for an object, raw, in use or decay. Finally, you assign an emotional tone, selecting words that steer the character of the scent.

In one trial, a participant uploaded an archival photograph of a couple eating fruit on garden steps. They selected the fruit as the subject, set it to “in use” and chose “calm” as the mood. The machine translated that combination into scent.
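For readers who think in code, the three dial choices can be pictured as a small structured input that gets folded into a text prompt. The sketch below is purely illustrative: the names `DialSettings` and `build_prompt`, the stage lists and the prompt wording are all assumptions made for this example, not the Anemoia Device's actual software, which the source does not describe.

```python
from dataclasses import dataclass

# Assumed option sets, taken only from the stages named in the article.
# The real device may offer more or different choices.
PERSON_STAGES = {"child", "elderly"}
OBJECT_STAGES = {"raw", "in use", "decay"}


@dataclass(frozen=True)
class DialSettings:
    """Hypothetical container for the three dial choices."""
    subject: str     # point of view, e.g. "fruit" or "tree"
    is_person: bool  # decides which lifecycle stages apply
    stage: str       # lifecycle stage
    mood: str        # emotional tone, e.g. "calm"

    def validate(self) -> None:
        allowed = PERSON_STAGES if self.is_person else OBJECT_STAGES
        if self.stage not in allowed:
            raise ValueError(
                f"stage {self.stage!r} is not one of {sorted(allowed)}"
            )


def build_prompt(settings: DialSettings) -> str:
    """Combine the dial choices into one text prompt (wording is invented)."""
    settings.validate()
    return (
        f"Describe the scent of the {settings.subject} in this photograph, "
        f"at the '{settings.stage}' stage, with a {settings.mood} mood."
    )


# The trial described above: fruit as subject, "in use", "calm".
trial = DialSettings(subject="fruit", is_person=False, stage="in use", mood="calm")
print(build_prompt(trial))
```

The point of the sketch is the shape of the interaction: the model interprets the image, but three small, human-made choices constrain what it is asked to describe.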

Why does this matter? Because smell is still the underused sense in experiential work. We obsess over visuals, spatial design and soundtracks, yet the sense most directly linked to memory rarely gets treated as a strategic layer.

For brands building installations, retail moments or cultural activations, this opens a different door. Imagine heritage archives turned into evolving scent landscapes. Imagine community-driven exhibitions where contributors upload their own imagery and shape the atmosphere in real time.

It raises a useful question for anyone designing immersive spaces: are you building environments people see, or environments they actually remember?

Inspiration by Mark
